Coding in Apex is similar to Java and C# in many ways, yet quite different in a few others. One thing they have in common is that applying proper design patterns to solve a problem matters regardless of platform or technology. This is particularly true for Apex triggers, because in a typical Salesforce application triggers play a pivotal role and drive a major chunk of the logic. A proper trigger design is therefore essential, not only for a successful implementation but also for laying a strong architectural foundation.

Several trigger design patterns have already been proposed, and some of them have gained mainstream support among developers and architects. This article series proposes one more such pattern for handling triggers. All of these patterns share a common trait, ‘One Trigger to rule them all’, a phrase that Dan Appleman made famous in his ‘Advanced Apex Programming’. In fact, the design pattern published in this series is heavily influenced by Dan Appleman’s original design pattern; many thanks to you, Dan. Another design pattern that influenced this implementation was published by Tony Scott, and I would like to thank him as well for his wonderful contribution. I also borrowed a couple of ideas from Adam Purkiss, such as wrapping the trigger variables in a wrapper class, and I thank him as well. His training videos on Force.com design patterns (available at Pluralsight as a paid subscription) are a great inspiration, and anyone serious about taking their Force.com development skills to the next level MUST watch them.

That said, let’s dive into the details.

Now, why do we need yet another design pattern for triggers when we already have a few? There are a couple of reasons. Firstly, although the design patterns proposed by Dan and Tony provide a solid way of handling triggers, I feel there is still room to improve on them and provide a more elegant way of handling triggers. Secondly, both of these design patterns require touching the trigger framework code every time a new trigger is added; the method that I propose eliminates this (sure, the same can be replicated in their design patterns as well, as I only leverage the standard Force.com API). Thirdly, this trigger design pattern separates dispatching from handling and gives developers full flexibility in designing their trigger handling logic. Last but not least, this new framework is, as the name suggests, not just a design pattern; it is an architecture framework that provides complete control over handling triggers in a more predictable and uniform way and helps to organize and structure the codebase in a developer-friendly way. Further, it takes complete care of dispatching and provides a framework for handling the events, so developers need to focus only on building the logic to handle these events, not on dispatching.

Architecture

The fundamental principles that this architecture framework promotes are

- Order of execution
- Separation of concerns
- Control over reentrant code
- Clear organization and structure

Order of execution

In the traditional way of implementing triggers, a new trigger is defined (for the same object) as each requirement comes in. Unfortunately, the problem with this type of implementation is that there is no control over the order of execution, because the platform does not guarantee the order in which multiple triggers on the same object and event run. This may not be a big problem for smaller implementations, but it is definitely a nightmare for medium to large ones. In fact, this is exactly why many people have come up with trigger design patterns that promote the idea of ‘One trigger to rule them all’. This architecture framework supports the same principle, and it does so by introducing the concept of ‘dispatchers’. More on this later.

Separation of concerns

The other design patterns that I referenced above pretty much promote the same idea of ‘One trigger to rule them all’. The one thing that I find missing is ‘Separation of concerns’. What I mean is that the trigger factory/dispatcher calls a method in a single class that handles all the trigger event related code. Once again, this might not be a problem for smaller implementations, but for medium to large implementations it soon becomes difficult to maintain: as requirements change or new requirements come in, these classes grow bigger and bigger. The new framework alleviates this by introducing ‘handlers’ to address ‘Separation of concerns’.

Control over reentrant code

Many times the trigger code needs to perform a DML operation on the same object, which ends up invoking the same trigger once again. This can become recursive, and developers usually introduce a condition variable (typically a static variable) to prevent that. But this is not an elegant solution, because it does not guarantee that the reentrant code runs in an orderly fashion. The new architecture gives developers complete control: they can either deny the reentrant call or allow both the first call and the reentrant call, but in totally separate ways, so they don’t step on each other.

Clear organization and structure

As highlighted under the section ‘Separation of concerns’, in medium to large implementations the codebase tends to grow with no order or structure, and very soon developers find it difficult to maintain. The new framework provides complete control over organizing and structuring the codebase based on the object and the event types.

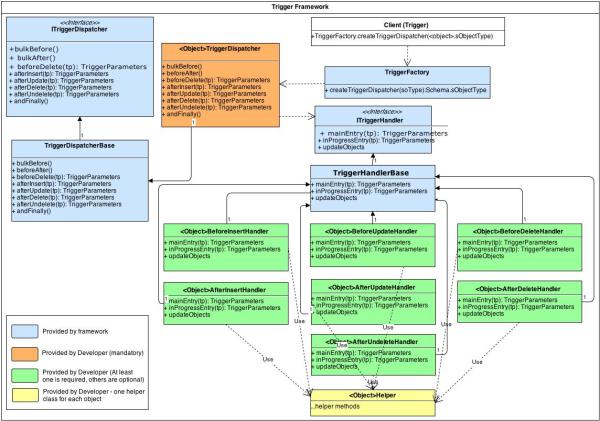

UML Diagram

The following UML diagram captures all the pieces of this architecture framework.

Framework Components

TriggerFactory

The TriggerFactory is the entry point of this architecture framework, and the call to it is the only line of code that resides in the trigger definition (you may have other code such as logging, but as far as this architecture framework is concerned, this is the only code required). The TriggerFactory class, as the name indicates, is a factory class that creates an instance of the dispatcher for the object that the caller (the trigger) specifies and delegates the call to the appropriate event handler method (for the trigger event, such as ‘before insert’, ‘before update’, …) that the dispatcher provides. The beauty of the TriggerFactory is that it automatically finds the correct dispatcher for the object that the trigger is associated with, as long as the dispatcher is named according to the naming convention. This convention is very simple, as specified in the following table.

| Object Type | Format | Example | Notes |
|---|---|---|---|
| Standard Objects | <Object>TriggerDispatcher | AccountTriggerDispatcher | |
| Custom Objects | <Object>TriggerDispatcher | MyProductTriggerDispatcher | Assuming that MyProduct__c is the custom object, the dispatcher is named without the ‘__c’. |

It accomplishes this by using the new Type API. Using the Type API to construct the instances of the dispatchers helps to avoid touching the TriggerFactory class every time a new trigger dispatcher is added (ideally only one trigger dispatcher class is needed per object).
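The lookup described above can be sketched roughly as follows. This is a minimal illustration rather than the framework's actual source: the class name `TriggerFactorySketch` and the method names are assumptions made for the example, while `Type.forName` and `Type.newInstance` are standard Apex, and `ITriggerDispatcher` is the framework interface described in the next section.

```apex
// Sketch only: resolves a dispatcher class by the naming convention above.
// Class and method names are illustrative assumptions, not the framework's code.
public with sharing class TriggerFactorySketch {

    // Builds '<Object>TriggerDispatcher', dropping a trailing '__c' for custom objects.
    public static String getDispatcherTypeName(Schema.SObjectType soType) {
        String objectName = soType.getDescribe().getName();
        if (objectName.endsWithIgnoreCase('__c')) {
            objectName = objectName.removeEndIgnoreCase('__c');
        }
        return objectName + 'TriggerDispatcher';
    }

    // Instantiates the dispatcher through the Type API.
    // Returns null when no class with the conventional name exists.
    public static ITriggerDispatcher getTriggerDispatcher(Schema.SObjectType soType) {
        Type dispatcherType = Type.forName(getDispatcherTypeName(soType));
        return dispatcherType == null
            ? null
            : (ITriggerDispatcher) dispatcherType.newInstance();
    }
}
```

With something like this in place, the body of a trigger on Account could be a single call that passes `Account.sObjectType` to the factory; the exact entry-point method name depends on the framework code and is not shown here.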

Dispatchers

The dispatchers dispatch the trigger events to the appropriate event handlers. The framework provides the interface and a base class with virtual implementations of the interface methods, but developers need to provide their own dispatcher class for each object to which they want this framework applied, either implementing the interface directly or deriving from the virtual base class. Ideally, developers should inherit from TriggerDispatcherBase, as it not only provides the virtual methods, which lets them implement only the event handlers they are interested in, but also adds the reentrant feature to their logic.

ITriggerDispatcher

As discussed above, the ITriggerDispatcher essentially contains the event handler method declarations. The trigger parameters are wrapped in a class named ‘TriggerParameters’.
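As a rough picture of what such an interface might look like, here is a minimal sketch. Only `afterUpdate(TriggerParameters tp)` is named in this article; the other method names follow the same pattern by assumption, and the real interface may declare additional methods.

```apex
// Sketch only: one method per trigger event, each receiving the wrapped
// trigger context. Method names other than afterUpdate are assumptions.
public interface ITriggerDispatcher {
    void beforeInsert(TriggerParameters tp);
    void beforeUpdate(TriggerParameters tp);
    void beforeDelete(TriggerParameters tp);
    void afterInsert(TriggerParameters tp);
    void afterUpdate(TriggerParameters tp);
    void afterDelete(TriggerParameters tp);
    void afterUndelete(TriggerParameters tp);
}
```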

TriggerDispatcherBase

The TriggerDispatcherBase class implements the ITriggerDispatcher interface and provides virtual implementations of the interface methods, so that developers need not implement the event handlers that they do not wish to use. The TriggerDispatcherBase also has one more important method named ‘execute’, which controls whether a call is dispatched in reentrant fashion or not. It has a separate member variable for each event to hold the instance of the trigger handler for that event, which the ‘execute’ method uses to control the reentrant feature.
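The reentrancy control described here might be sketched as below. This is an illustration under stated assumptions, not the framework's source: it keeps the active handlers in a single static map keyed by event instead of one member variable per event, and it assumes the `ITriggerHandler` methods `mainEntry`, `inProgressEntry` and `updateObjects` described later in this article.

```apex
// Sketch only. The article describes one member variable per event; a map
// keyed by event is used here purely to keep the example short. Because the
// map is static and shared by all dispatchers, this simplified version does
// not distinguish between objects handling the same event in one transaction.
public virtual class TriggerDispatcherBase implements ITriggerDispatcher {

    // Remembers the handler that is currently processing each event.
    private static Map<TriggerParameters.TriggerEvent, ITriggerHandler> activeHandlers =
        new Map<TriggerParameters.TriggerEvent, ITriggerHandler>();

    // Empty virtual defaults: subclasses override only the events they need.
    public virtual void beforeInsert(TriggerParameters tp) {}
    public virtual void beforeUpdate(TriggerParameters tp) {}
    public virtual void beforeDelete(TriggerParameters tp) {}
    public virtual void afterInsert(TriggerParameters tp) {}
    public virtual void afterUpdate(TriggerParameters tp) {}
    public virtual void afterDelete(TriggerParameters tp) {}
    public virtual void afterUndelete(TriggerParameters tp) {}

    // A non-null handler marks the first call; null signals a reentrant call.
    protected void execute(ITriggerHandler handlerInstance, TriggerParameters tp,
                           TriggerParameters.TriggerEvent tEvent) {
        if (handlerInstance != null) {
            activeHandlers.put(tEvent, handlerInstance);
            handlerInstance.mainEntry(tp);
            handlerInstance.updateObjects();
        } else {
            ITriggerHandler inProgress = activeHandlers.get(tEvent);
            if (inProgress != null) {
                inProgress.inProgressEntry(tp);
            }
        }
    }
}
```

The design point is that the first call and the reentrant call reach the same handler instance through different methods, so the two code paths stay separate.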

<Object>TriggerDispatcher

The trigger dispatcher classes contain the methods to handle the trigger events, and this is where developers instantiate the appropriate trigger event handler classes. At the heart of the dispatcher lies the ITriggerDispatcher interface, which developers implement to provide the dispatcher for an object. The interface declares methods for all trigger events, which means that a dispatcher implementing it directly must implement methods for every event. However, since it may not be necessary to handle every trigger event, the framework provides a base class named ‘TriggerDispatcherBase’ with default (virtual) implementations for all events. By deriving from TriggerDispatcherBase, which itself implements the interface, developers can override the methods for only the events they really need. One more reason to derive from TriggerDispatcherBase rather than implement ITriggerDispatcher directly is that the TriggerDispatcherBase.execute method provides the reentrant feature, which is not available to dispatchers that do not derive from this class.

It is very important that the trigger dispatcher class is named according to the naming convention described under the TriggerFactory section. If this naming convention is not followed, the framework will not be able to find the dispatcher and the trigger will throw an exception.

Understanding the dispatcher is critical to implementing this framework successfully, as this is where the developer can precisely control the reentrant feature. This is achieved by the method named ‘execute’ in TriggerDispatcherBase, which the event handler methods call, passing an instance of the appropriate event handler class. The event handler method sets a variable to track reentrancy, and it is important to reset it after calling the ‘execute’ method. The following code shows a typical implementation of the event handler method for the ‘after update’ trigger event on the Account object.

    // State variable: true while an after update call is being handled
    private static Boolean isAfterUpdateProcessing = false;

    public virtual override void afterUpdate(TriggerParameters tp) {
        if (!isAfterUpdateProcessing) {
            // First call: mark as in progress, run the handler, then reset
            isAfterUpdateProcessing = true;
            execute(new AccountAfterUpdateTriggerHandler(), tp, TriggerParameters.TriggerEvent.afterUpdate);
            isAfterUpdateProcessing = false;
        } else {
            // Reentrant call: passing null routes to the handler already in progress
            execute(null, tp, TriggerParameters.TriggerEvent.afterUpdate);
        }
    }

In this code, the variable ‘isAfterUpdateProcessing’ is the state variable; being static, it is initialized to false when the dispatcher class is first loaded in the transaction. Inside the event handler method, a check makes sure that the method is not already in progress, and the variable is then set to true to indicate that a call to handle the after update event is under way. We then call the ‘execute’ method and finally reset the state variable. At the outset, resetting the state variable to false may not seem very important, but failing to do so will largely invalidate the framework, and in most cases you may not even be able to deploy the application to production. Let me explain. When a user does something with an object that has this framework implemented, for example saving a record, the trigger gets invoked, the before trigger event handlers are executed, the record is saved, the after trigger event handlers are executed, and then the page is either refreshed or redirected to another page depending on the logic. All of this happens in one single context. So it might look as though the state variable, such as ‘isAfterUpdateProcessing’, only needs to be set to true inside the if condition.

Handlers

Handlers contain the actual business logic that needs to be executed for a particular trigger event. Ideally, every trigger event has an associated handler class to handle the logic for that event. This increases the number of classes to be written, but it gives the codebase a very clean organization and structure. The approach proves itself in the long run: maintenance and enhancements are much easier, as even a new developer knows exactly where to make changes once he or she understands how the framework works.

Another key feature of the handlers is the flexibility they give developers to implement or ignore the reentrant functionality. The ‘mainEntry’ method is the gateway for the initial call. If this call performs a DML operation on the same object, the trigger is invoked again, but this time the framework knows that a call is already in progress; hence, instead of ‘mainEntry’, it calls the ‘inProgressEntry’ method. So if reentrant behavior is required, the developer needs to place that code inside the ‘inProgressEntry’ method. The framework provides only the interface; developers need to implement it for each event of an object that they handle, and can choose not to implement handlers for events they are not going to handle.

ITriggerHandler

The ITriggerHandler defines the interface to handle the trigger events in reentrant fashion or non-reentrant fashion.

TriggerHandlerBase

The TriggerHandlerBase is an abstract class that implements the interface ITriggerHandler, providing virtual implementation for those interface methods, so that the developers need not implement all the methods, specifically, the ‘inProgressEntry’ and the ‘updateObjects’ methods, that they do not wish to use.
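To make the relationship between the interface and the base class concrete, here is a minimal sketch of both. It is an assumption-laden illustration: the method names `mainEntry`, `inProgressEntry` and `updateObjects` and the protected map `sObjectsToUpdate` come from this article and its comments, but the bodies are guesses at a reasonable default, not the framework's code. In an org, each type would live in its own class file.

```apex
// File: ITriggerHandler.cls (sketch)
public interface ITriggerHandler {
    void mainEntry(TriggerParameters tp);        // first call for the event
    void inProgressEntry(TriggerParameters tp);  // reentrant call for the event
    void updateObjects();                        // commits queued updates
}

// File: TriggerHandlerBase.cls (sketch)
public abstract class TriggerHandlerBase implements ITriggerHandler {

    // Records queued for update, keyed by Id so each record appears once.
    protected Map<Id, SObject> sObjectsToUpdate = new Map<Id, SObject>();

    // Every concrete handler must supply its main logic.
    public abstract void mainEntry(TriggerParameters tp);

    // Default: ignore reentrant calls unless a handler overrides this.
    public virtual void inProgressEntry(TriggerParameters tp) {}

    // Default: update everything queued in the map in one DML statement.
    public virtual void updateObjects() {
        if (!sObjectsToUpdate.isEmpty()) {
            update sObjectsToUpdate.values();
        }
    }
}
```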

<Object><Event>TriggerHandler

As discussed above, the developer needs to define one class per event per object that implements the ITriggerHandler interface. While there is no strict naming requirement as there is for the dispatcher, the following convention is suggested.

| Object Type | Format | Example | Notes |
|---|---|---|---|
| Standard Objects | <Object><Event>TriggerHandler | AccountAfterInsertTriggerHandler, AccountAfterUpdateTriggerHandler, etc. | |
| Custom Objects | <Object><Event>TriggerHandler | MyProductAfterInsertTriggerHandler | Assuming that MyProduct__c is the custom object, the handler is named without the ‘__c’. |

So, if we take the Account object, the developer would implement the following event handler classes, each mapping to the corresponding trigger event.

| Trigger Event | Event Handler |
|---|---|
| Before Insert | AccountBeforeInsertTriggerHandler |
| Before Update | AccountBeforeUpdateTriggerHandler |
| Before Delete | AccountBeforeDeleteTriggerHandler |
| After Insert | AccountAfterInsertTriggerHandler |
| After Update | AccountAfterUpdateTriggerHandler |
| After Delete | AccountAfterDeleteTriggerHandler |
| After Undelete | AccountAfterUndeleteTriggerHandler |

Note that NOT all of the event handler classes listed in the above table need to be created. If you are NOT going to handle a certain event, such as ‘After Undelete’ for the Account object, you do not need to define ‘AccountAfterUndeleteTriggerHandler’.
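As an illustration of a single event handler, the following sketch shows what an after update handler for Account might look like. The business rule (stamping the Description when the Rating changes) is invented for the example, and the property names `tp.newList` and `tp.oldMap` are assumptions about how TriggerParameters exposes the trigger context.

```apex
// Sketch only: a hypothetical handler for the 'after update' event on Account.
public class AccountAfterUpdateTriggerHandler extends TriggerHandlerBase {

    // First call: queue an update for every account whose Rating changed.
    public override void mainEntry(TriggerParameters tp) {
        for (Account acct : (List<Account>) tp.newList) {
            Account oldAcct = (Account) tp.oldMap.get(acct.Id);
            if (acct.Rating != oldAcct.Rating) {
                // Records in an after trigger are read-only, so queue a fresh copy.
                sObjectsToUpdate.put(acct.Id, new Account(
                    Id = acct.Id,
                    Description = 'Rating changed to ' + acct.Rating));
            }
        }
    }

    // Reentrant call: the queued update above fires the trigger again on the
    // same object; this handler chooses to do nothing in that case.
    public override void inProgressEntry(TriggerParameters tp) {
        System.debug('Reentrant after update call ignored for Account');
    }
}
```

Because the queued update touches Account again, this one handler exercises both entry points: `mainEntry` for the user's save and `inProgressEntry` for the update issued by the framework.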

TriggerParameters

The TriggerParameters class encapsulates the trigger context variables that the Force.com platform provides during the invocation of a trigger. It is simply a convenience, as it avoids typing all those parameters again and again in the event handler methods.
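A wrapper of this kind could be sketched as follows. The nested enum `TriggerParameters.TriggerEvent` appears in the dispatcher code earlier in this article; the property names and the constructor signature are assumptions made for illustration, and the actual class may carry more of the trigger context.

```apex
// Sketch only: wraps the trigger context variables in one object.
// Property names and constructor shape are illustrative assumptions.
public class TriggerParameters {

    public enum TriggerEvent {
        beforeInsert, beforeUpdate, beforeDelete,
        afterInsert, afterUpdate, afterDelete, afterUndelete
    }

    public List<SObject> oldList { get; private set; }
    public List<SObject> newList { get; private set; }
    public Map<Id, SObject> oldMap { get; private set; }
    public Map<Id, SObject> newMap { get; private set; }
    public TriggerEvent tEvent { get; private set; }

    public TriggerParameters(List<SObject> oldList, List<SObject> newList,
                             Map<Id, SObject> oldMap, Map<Id, SObject> newMap,
                             TriggerEvent tEvent) {
        this.oldList = oldList;
        this.newList = newList;
        this.oldMap = oldMap;
        this.newMap = newMap;
        this.tEvent = tEvent;
    }
}
```

Inside a trigger, an instance would typically be built from `Trigger.old`, `Trigger.new`, `Trigger.oldMap` and `Trigger.newMap` together with the event being processed.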

<Object>Helper

Often you will want to reuse code, such as sending email notifications, from different event handlers. After all, one of the fundamental principles of object-oriented programming is code reusability. To achieve that, this architecture framework proposes placing all the common code in a helper class, so that not only the event handlers but also controllers, scheduled jobs and batch Apex jobs can use the same methods if necessary. This approach is not without its caveats. For example, if the helper method varies slightly based on the event type, how would you handle that? Do you pass the event type to the helper method, so that the helper method uses conditional logic? There is no right or wrong answer, but personally I think it is not a good idea to pass event types to helper methods; for this example, you can just pass a flag, and that solves the issue. For other situations, passing a flag may not be enough and you need to think a little smarter; I’ll leave that to the reader, as the situation is unique to their requirements.
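The flag-based approach could look something like the sketch below. The class `AccountHelper`, the method `buildNotifications` and its parameters are hypothetical names chosen for the example; the `Messaging.SingleEmailMessage` calls are standard Apex. The method only builds the messages, leaving the decision to send them to the caller.

```apex
// Sketch only: AccountHelper and buildNotifications are hypothetical names.
public with sharing class AccountHelper {

    // Builds (but does not send) one notification per account.
    // isNewRecord is the flag discussed above: callers state whether the
    // records were just created instead of passing the trigger event type.
    public static List<Messaging.SingleEmailMessage> buildNotifications(
            List<Account> accounts, String recipient, Boolean isNewRecord) {
        List<Messaging.SingleEmailMessage> messages =
            new List<Messaging.SingleEmailMessage>();
        for (Account acct : accounts) {
            Messaging.SingleEmailMessage message = new Messaging.SingleEmailMessage();
            message.setToAddresses(new List<String>{ recipient });
            message.setSubject(
                (isNewRecord ? 'Account created: ' : 'Account updated: ') + acct.Name);
            message.setPlainTextBody('Account Id: ' + acct.Id);
            messages.add(message);
        }
        return messages;
    }
}
```

An after insert handler would pass `true` and an after update handler `false`, so the helper stays unaware of trigger events and remains usable from controllers or batch jobs.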

Using the framework to handle updates

The framework comes with a default implementation to handle updates. It achieves this with a Map variable that holds the objects to be updated; all the developer needs to do is add the objects to be updated to this map in their event handlers. The TriggerHandlerBase abstract class has the default implementation that updates the objects from this map, and it is called by the ‘execute’ method in TriggerDispatcherBase. Note that I chose to call the updateObjects method only for ‘mainEntry’ and not for ‘inProgressEntry’, simply because I didn’t have the time to test it.

Another thing to note is that, since the framework relies on helper classes to get things done, sometimes the objects that you need to update are prepared in these helper classes. How would you handle that? I suggest designing your helper methods to return those objects as a list and adding them to the map from your event handler code, instead of passing the map variable to the helper class.

This framework can easily be extended to handle inserts and deletes as well. To handle inserts, add a List variable to TriggerHandlerBase, provide a virtual method named ‘insertObjects’ that inserts the list, and call it from the ‘execute’ method on TriggerDispatcherBase. I’ll update the code when time permits; in the meantime, I’ll leave this to the reader to implement for their projects.

Note that it is not possible to do upserts in this way, because the Force.com platform doesn’t support upserting generic objects, and our map uses the generic object type (sObject). (Thanks to Adam Purkiss for pointing this out.)

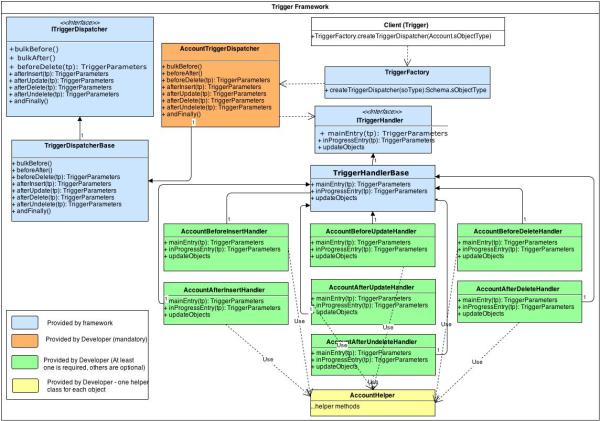

Example

To illustrate this design pattern, the following diagram depicts how this architecture framework will apply for the account object.

The trigger architecture framework project is available as open source and hosted at Google Code. The source code for the entire framework, along with a dummy implementation that includes classes for handling trigger events on the Account object, is available as an AppExchange package. If you need it as a zip file, it can be downloaded from here. The code documentation is built using ApexDoc and can be downloaded from here.

Summary

The new trigger architecture framework provides a strong foundation for building applications on the Force.com platform, with the benefits outlined previously. The framework does involve many parts, business solutions built on it need to follow certain rules and conventions, and the number of classes written will be a little high, but the benefits of this approach will easily outweigh the effort and the investment. It may still be overkill for very small implementations, but it will prove its usefulness for medium to large ones.


Very comprehensive.

Thanks Sushant.

Excellent work Hari. This is definitely the next logical development with the trigger architecture. You’ve provided an elegant and comprehensive treatment on the subject and I look forward to trying it out. Thanks for this great contribution.

Thanks Adam. I implemented this architecture framework in two force.com applications and the results are amazing. I hope the community will benefit from this.

Regards,

Hari Krishnan.


Very nice. Full write up of my thoughts here: http://advancedapex.com/2013/07/26/interesting-trigger-framework/

Love your comment. Thanks Dan.

[…] was thrilled to see the other day a blog post by Hari Krishnan called “An architecture framework to handle triggers in the Force.com platform”. It’s beautiful piece of work (and I do appreciate the shout out). As with our own framework, […]

Thanks Hari for this post… awesome!

You may have saved my “bacon” on a current project Hari! I’ve referenced your project on Salesforce StackExchange in [this post](http://salesforce.stackexchange.com/questions/14639/difficulties-with-applemans-trigger-classes)

Thanks cloudmech. Glad that it worked out.

Hari, how did you envision calling public virtual void updateObjects() when you want to do multiple DML operations in the same execution context (a feature I very much need)? It’s not clear to me how to pass “sObjectsToUpdate” from one class to another without interrupting the execution context and triggering another one. Did you intend to use a public or public static variable, add a separate class for it, or something else? It’s understandably not referenced in your TriggerParameters class.

It is possible to update multiple objects in the same execution context. Let me explain about this. You can use the updateObjects in two different ways:

1. Since sObjectsToUpdate is declared as protected in TriggerHandlerBase, you can use it in your event handler class (such as AccountAfterInsertTriggerHandler) to add the objects that you want to update. Since it is declared as a map of generic objects, you can mix different types of objects (for example Account, Opportunity or any custom object) in it. Then you can call the updateObjects method defined in the base class from your handler. The drawback is that you don’t get any exception handling, since the method is defined in the base class (and it has to be generic, since every other handler class may use it). This is fine if you are okay with that, but I guess in most cases you will want custom exception handling.

2. The second method is to override updateObjects in your handler, so that you can handle exceptions the way you want. You can also add other custom logic, such as initiating batch Apex or a web service call.

If your logic resides in multiple separate classes and you want to update their results using updateObjects, then I suggest making the methods in those classes return the objects that you want to update, calling those methods from your handler, and then adding the returned objects to the map.

For example:

    // AccountAfterInsertTriggerHandler
    public override void mainEntry(TriggerParameters tp) {
        List<Account> listAccounts = AccountHelper.getAccounts();
        List<Opportunity> listOpps = OpportunityHelper.getOpportunities(listAccounts);
        for (Account acct : listAccounts)
            sObjectsToUpdate.put(acct.Id, acct);
        for (Opportunity opp : listOpps)
            sObjectsToUpdate.put(opp.Id, opp);
    }

    // AccountHelper
    public static List<Account> getAccounts() {
        // ...
    }

    // OpportunityHelper
    public static List<Opportunity> getOpportunities(List<Account> accounts) {
        // ...
    }

Okay – I think I missed two things to put together in the framework.

1. I deliberately chose not to include the helper class, but now I guess it is better to include it in the diagram. Basically, it’s a good practice to have a helper class for each object, so that all methods consumed by the logic related to that object, wherever it lives (controllers, event handlers, …), can reside in this helper class to achieve code reusability.

2. I missed calling updateObjects from the dispatcher. In order to have the above piece work, you need to call the updateObjects in the TriggerDispatcherBase.execute as a last statement.

I’ll add these two things when I get a chance.

Hope this information helps.

Regards,

Hari Krishnan.

I made the changes both to the code and the UML diagram and updated the article. The new code base is available under Google code (I’ll update the unmanaged package later).

Hi Hari Krishnan! Thanks for this great article. A couple years have passed yet it’s still very relevant!

This is my first attempt at real OOP and your framework is a nice introduction.

Would you be able to update the managed package as detailed above?

I read this article with two years of experience just drafting triggers and helper classes in my job. Really interesting, and I am sure this architecture will be a huge benefit for larger apps where triggers are more numerous! The most excellent blog post I have read so far on triggers. Thank you!

Thanks Mohit.

Hi Hari, this is a fantastic write-up. I look forward to implementing it shortly. A quick question… I like to break up the methods when there is more than one trigger action I wish to perform. I’ll build on your example above, but am wondering what your thoughts are on how to use the one “sObjectsToUpdate” map for all methods.

    // AccountAfterInsertTriggerHandler
    public override void mainEntry(TriggerParameters tp) {
        List<Account> listAccounts = AccountHelper.getAccounts();
        updateAccountStatus(tp, listAccounts);
        updateAccountPriority(tp, listAccounts);
    }

    private void updateAccountStatus(TriggerParameters tp, List<Account> listAccounts) {
        for (Account acc : listAccounts) {
            // Reuse the pending copy, if any, so earlier changes are kept
            if (sObjectsToUpdate.containsKey(acc.Id)) {
                acc = (Account) sObjectsToUpdate.get(acc.Id);
            }
            // do something
            sObjectsToUpdate.put(acc.Id, acc);
        }
    }

    private void updateAccountPriority(TriggerParameters tp, List<Account> listAccounts) {
        for (Account acc : listAccounts) {
            // Reuse the pending copy, if any, so earlier changes are kept
            if (sObjectsToUpdate.containsKey(acc.Id)) {
                acc = (Account) sObjectsToUpdate.get(acc.Id);
            }
            // do something
            sObjectsToUpdate.put(acc.Id, acc);
        }
    }

    // AccountHelper
    public static List<Account> getAccounts() {
        // ...
    }

Something like this? Let’s say you modify records [0] and [1] in the first method and you modify records [0] and [2] in the second method. I’d want to be sure that the second method doesn’t overwrite the changes the first method makes to record [0]… Thoughts?

Nathan,

1. Using the sObjectsToUpdate map to update all records of the same object or of multiple objects has both pros and cons. If you don’t need any special logic when an exception happens, then using this map comes in very handy. On the other hand, if you want to handle the exception and perform some logic, or if you want to use savepoints and rollback, then it is probably better not to use this map and its corresponding method.

2. If you modify records [0] and [1] in the first method and modify records [0] and [2] in the second method, the latter will definitely overwrite the former, and you will lose the changes made in the first method if your second method updates the same field that the first method updated.

Understood and thanks for the clarification. I actually don’t mind the one map to update all as I do not (of yet) have the need for exception handling. That said, I think this can be done in the TriggerHandlerBase (but maybe this is my inexperience talking). I’ve modified that class to add a few methods:

I added processObjects() to give me the default behavior of “do all” but to give me the granular override ability to change order if/when needed. I also replaced “updateObjects()” with “processObjects()” in the TriggerDispatcherBase class.

I added methods for “addToInsert()” and more so that I don’t use “sObjectsToInsert.add()” directly in the Handlers themselves. This allows me to standardize the logic (read: error handling, though I haven’t built this out yet). What I’ve built to this point is simply a way to allow for two methods updating the same sObject record (assuming different fields at this point).

    public abstract class TriggerHandlerBase implements TriggerHandler {
        protected List<sObject> sObjectsToInsert = new List<sObject>();
        protected Map<Id, sObject> sObjectsToUpdate = new Map<Id, sObject>();
        protected Map<Id, sObject> sObjectsToDelete = new Map<Id, sObject>();
        protected List<sObject> sObjectsToUndelete = new List<sObject>();

        public virtual void processObjects() {
            insertObjects();
            updateObjects();
            deleteObjects();
            undeleteObjects();
        }

        public void insertObjects() {
            if (sObjectsToInsert.size() > 0)
                insert sObjectsToInsert;
        }

        public void updateObjects() {
            if (sObjectsToUpdate.size() > 0)
                update sObjectsToUpdate.values();
        }

        public void deleteObjects() {
            if (sObjectsToDelete.size() > 0)
                delete sObjectsToDelete.values();
        }

        public void undeleteObjects() {
            if (sObjectsToUndelete.size() > 0)
                undelete sObjectsToUndelete;
        }

        public void addToInsert(sObject so, Map<String, Object> values) {
            for (String value : values.keySet()) {
                so.put(value, values.get(value));
            }
            sObjectsToInsert.add(so);
        }

        public void addToUpdate(sObject so, Map<String, Object> values) {
            if (!sObjectsToUpdate.containsKey(so.Id)) {
                sObjectsToUpdate.put(so.Id, UtilsGeneral.newSObject(so));
            }
            for (String value : values.keySet()) {
                sObjectsToUpdate.get(so.Id).put(value, values.get(value));
            }
        }

        public void addToDelete(sObject so) {
            if (!sObjectsToDelete.containsKey(so.Id)) {
                sObjectsToDelete.put(so.Id, UtilsGeneral.newSObject(so));
            }
        }

        public void addToUndelete(sObject so) {
            sObjectsToUndelete.add(so);
        }
    }

I will eventually try to add in some error handling here, if possible, and clean up the logic a bit, but this is my first pass.

Thoughts?

http://nwilliamsscu.blogspot.com/

Very interesting. I guess this approach definitely adds a lot of value to this framework and can make life better for developers. Using a collection for inserts is also a good idea, as it allows inserts of various objects to be combined in one single call (like the ‘update’). The only case I see where this approach cannot be used is when exceptions must be handled in a specific way, particularly when someone wants to use savepoints and rollback. Otherwise, this is excellent. I haven’t had a chance to play with this, but I will try to.

I recently ran into an issue where order of insertion and order of update made a distinct difference. Since the framework inserts all sObjects at once in one bulk operation, there are a few problems you run into: chunking and order of processing.

The chunking can be resolved by grouping the sObjects by sObjectType prior to commit and the order of processing can be handled by ordering those groupings in a logical fashion.

My need required I insert top-down in a given hierarchy. Outside of the five objects listed below, it doesn’t matter, but these five must be in a defined order.

Here is a modified method, which handles the update section:

    public void updateObjects() {
        System.debug('##### Method variable: andFinally().sObjectsToUpdate = ' + sObjectsToUpdate.size());
        if (!sObjectsToUpdate.isEmpty()) {
            Integer counter = 0;
            // Position -> object name, for the objects that need a fixed order
            Map<Integer, String> orderOf = new Map<Integer, String>();
            orderOf.put(orderAccount, 'Account');
            orderOf.put(orderOpportunity, 'Opportunity');
            orderOf.put(orderOpportunityLineItem, 'OpportunityLineItem');
            orderOf.put(orderSchedule, 'Schedule__c');
            orderOf.put(orderScheduleLine, 'Schedule_Line__c');
            // Reverse lookup: object name -> position
            Map<String, Integer> getOrder = new Map<String, Integer>();
            for (Integer i : orderOf.keySet()) {
                getOrder.put(orderOf.get(i), i);
            }
            counter = orderOf.size();
            // Group the queued records by object name
            Map<String, List<sObject>> mapOf = new Map<String, List<sObject>>();
            for (sObject so : sObjectsToUpdate.values()) {
                String objName = UtilsGeneral.getSObjectTypeName(so);
                System.debug('##### Method variable: updateObjects().objName = ' + objName);
                System.debug('##### Method variable: updateObjects().mapOf.containsKey(' + objName + ') = ' + mapOf.containsKey(objName));
                if (!mapOf.containsKey(objName)) {
                    System.debug('##### Method variable: updateObjects().mapOf.containsKey(' + objName + ') = ' + mapOf.containsKey(objName));
                    mapOf.put(objName, new List<sObject>());
                    if (getOrder.containsKey(objName)) {
                        orderOf.put(getOrder.get(objName), objName);
                    } else {
                        // Objects without a defined position go after the ordered ones
                        orderOf.put(counter, objName);
                        counter++;
                    }
                }
                System.debug('##### Method variable: insertObjects().so = ' + so);
                mapOf.get(objName).add(so);
            }
            // Update one object type at a time, in the defined order
            for (Integer i = 0; i < orderOf.size(); i++) {
                String obj = orderOf.get(i);
                if (mapOf.containsKey(obj)) {
                    System.debug('##### Method variable: updateObjects().i = ' + i);
                    System.debug('##### Method variable: updateObjects().obj = ' + obj);
                    System.debug('##### Method variable: updateObjects().mapOf.get(obj) = ' + mapOf.get(obj));
                    update mapOf.get(obj);
                }
            }
            counter = 0;
            orderOf = new Map<Integer, String>();
            getOrder = new Map<String, Integer>();
            mapOf = new Map<String, List<sObject>>();
        }
        sObjectsToUpdate = new Map<Id, sObject>();
    }

@Nathan Williams, it’s a wonderful discussion. I am also looking for a way to allow two methods to update the same sObject record (assuming different fields at this point). Can you please elaborate on the addToUpdate(sObject so, Map values) method? Where should this method be called from, and what are its input parameters? Can you also please provide an example? I also looked for the UtilsGeneral class but didn’t find it here. Can you please post that class as well?

@Subba UtilsGeneral methods:

public static String getSObjectTypeName(sObject obj) {
    return obj.getSObjectType().getDescribe().getName();
}

public static sObject newSObject(sObject obj) {
    return obj.getSObjectType().newSObject();
}

@Subba, I’m glad you are enjoying the conversation! My framework above actually handles what you are looking for beautifully. Since you are passing along a map of the fields to update, and since you have stored the object Id in the sObjectsToUpdate map, all changes are cumulative.

Try it out for yourself! Basically, every time you call addToUpdate(obj, new Map{field => value}) you will be adding that field to the object you are about to update. Let’s pretend:

addToUpdate(myContact, new Map{field1 => value1});

… now that you’ve made your first call: sObjectsToUpdate = Contact(field1 = value1)

… now do another call to that method:

addToUpdate(myContact, new Map{field2 => value2});

… it adds: sObjectsToUpdate = Contact(field1 = value1, field2 = value2)

… this is when the fields are different. What if you update the same field? The most recent call will be the winner (each call will overwrite the previous value)… make sense? I use this all the time, as it ensures only one big, cumulative update per record…

@Williams, thank you for your prompt response. I have one question.

What data type should we declare for the Map{field1 => value1}?

Is there a generic data type for the field value?

Map = Map.

@Williams, I mean: what would be the data type for the value in the map declaration? Map{Field, Value} = Map{String, ? }

@Subba, good point. That is a limitation of these replies: they don’t like the [lessthan] and [greaterthan] tags and kill whatever is between them. It should be Map{String, Object}.

@Williams, I ended up with some other issues while calling the addToUpdate() method and while adding elements to the sObjectsToUpdate map. Can you please provide a working example?
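A minimal, self-contained sketch of the cumulative behaviour described above, with the generic types written out. The class name and the pending() accessor are hypothetical. One deliberate difference from the posted UtilsGeneral.newSObject is that this sketch passes the record Id to newSObject; that is my assumption about what a later update statement needs, not something confirmed in the thread.

```apex
// Hypothetical stand-alone version of the buffer; in the framework this
// logic would live in the handler base class.
public class CumulativeUpdateSketch {

    private Map<Id, sObject> sObjectsToUpdate = new Map<Id, sObject>();

    // Field API name -> new value. Object is the generic value type.
    public void addToUpdate(sObject so, Map<String, Object> values) {
        if (!sObjectsToUpdate.containsKey(so.Id)) {
            // Assumption: keep the Id on the sparse copy so the update can find the row.
            sObjectsToUpdate.put(so.Id, so.getSObjectType().newSObject(so.Id));
        }
        for (String field : values.keySet()) {
            // A later call for the same field overwrites the earlier value.
            sObjectsToUpdate.get(so.Id).put(field, values.get(field));
        }
    }

    // Exposes the buffered record so the accumulated fields can be inspected.
    public sObject pending(Id recordId) {
        return sObjectsToUpdate.get(recordId);
    }
}

// Usage, assuming myContact is a Contact that already has an Id:
//   CumulativeUpdateSketch buffer = new CumulativeUpdateSketch();
//   buffer.addToUpdate(myContact, new Map<String, Object>{ 'FirstName' => 'Ada' });
//   buffer.addToUpdate(myContact, new Map<String, Object>{ 'Title' => 'Engineer' });
//   buffer.pending(myContact.Id) now carries both FirstName and Title.
```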

@Nathan Williams and @Hari. It’s good to see this discussion on error handling. I’ve been looking for material on that very subject! Unfortunately, I’ve found very little. I’m about to write a class to do all the error handling for the triggers in an app we’ve been working on and have been contemplating numerous related issues that you’ve touched on. My trigger code can handle the exceptions, but I don’t want it doing DML to report it, thus the need for a common error handling class.

As an example, I especially don’t want a trigger that does an update to Event doing DML to say Opportunity to report on errors from the data it received after completing the DML operation for Event! In some cases, it could need to do both or on some other object; perhaps even send an email! I’d much rather offload that work to an error handler class once those exceptions (or simple errors in data input that weren’t detected by the UI for that matter) have all been caught and collected in the current context.

The Architect I work with strongly believes that a trigger should never cause an error to appear in the UI. I have mixed feelings on that one, yet understand where he’s coming from and generally agree with him. No matter how hard we try to lock things down in the UI, all it takes is a new Admin to open the doors to all kinds of problems that can create havoc with improperly formatted data or text being sent instead of date/time data, etc. So I get where he’s coming from. There’s much we simply can’t control. At the same time, an unresponsive console that provides no clue as to what’s wrong isn’t very helpful to a user either.

I’ve had to code things down to the level of including profile IDs and RecordTypeIDs in order to help prevent some of these types of problems where Admins tinker with changing pages that our app uses; thus opening them to use by the wrong users or input of data in the wrong formats. Needless to say, this all needs to be reported in an efficient way so those records can be manually corrected if the trigger isn’t going to be permitted to say “sorry, you can’t do that User!”.

Would love to hear thoughts on implementing an error handler class that would work well with Hari’s architecture!

@Cloudmech, nice thoughts! Unlike the .NET platform, where in ASP.NET you can capture exceptions either at the page level or at the application level (global.asax.cs), the Force.com platform doesn’t provide such a feature as of Oct 2013. Without it, some level of manual exception handling will always be required. We can write helper classes and let them determine whether to silently consume the exception (after logging, …) or add a formatted error message to the UI, but that will depend on the requirements and how the system is designed. I too have mixed feelings when it comes to showing errors to the user; in general we should avoid showing errors to users unless it is absolutely required. The technique that I follow is to have helper classes and pass the exception and control variables to the exception handler methods, which determine, based on the control variables, whether to consume the exception or return its message. A nice workaround is to implement something like this: the link deals with capturing the exception message from emails, but the idea can be extended to the exceptions that we can catch as well. The merit of this approach is that it provides some visibility into the exceptions and an opportunity to improve the design or business process to either eliminate or at least reduce them, which might improve the overall usability of the application.

I have yet to really dig into adding an error handling section to Hari’s architecture, but will post back when I do. If anyone beats me to the punch, I’m all ears!

Ok, I just finished implementing some very robust error handling on top of Hari’s framework!

I started by creating a UtilExceptions class that I would be able to instantiate wherever I wanted. It includes things like a List to hold my current list of errors that have accumulated (I make a point not to insert until the call is all done, otherwise it could blow up my DML limit), methods to add different actions as ExceptionLog__c to the List, methods to log the exceptions (async ideally, fallback to sync if already async).

I then instantiate that UtilExceptions class in my TriggerHandlerBase class so I can use it throughout my triggers as just log.whateverMethod()

In my triggers, I tend to have a couple of different places I use the try/catch options. I always encapsulate the entire trigger mainEntry (just inside of it) with a try/catch where the catch will append that error to the List above, and I also include a try catch inside of every for loop so that I can append the object’s before and after state in my log (helps to actually fix the issue instead of just seeing “null pointer exception” and not knowing which one of your million records caused it!)

I’ll try to write up a blog post or two myself on this so I can go into far more detail, with code snippets (there’s just far too much to post here, but I’ll post some abbreviated sections). Bear in mind, that I have some other utilities that I won’t have space to post as well, such as UtilDML, in which I’ve created some insert/update/upsert, etc scripts that include the error handling (try to insert with Database.insert(obj, false) and if any fail, log them in the UtilExceptions List).

public class SubsidiaryTriggerHandlerBeforeInsert extends TriggerHandlerBase {

    private List<Subsidiary__c> newList = new List<Subsidiary__c>();
    private Map<Id, Subsidiary__c> newMap = new Map<Id, Subsidiary__c>();

    private void initMaps(TriggerParameters tp) {
        newList = (List<Subsidiary__c>) tp.newList;
        newMap = (Map<Id, Subsidiary__c>) tp.newMap;
    }

    public override void mainEntry(TriggerParameters tp) {
        // Outer try/catch: logs anything that fails outside the per-record loop.
        try {
            initMaps(tp);
            for (Subsidiary__c sub : newList) {
                // Inner try/catch: logs the failing record together with the exception.
                try {
                    // per-record logic goes here
                } catch (Exception e) {
                    log.createAndAppend('SubsidiaryTriggerHandlerBeforeInsert', 'mainEntry()', e, sub);
                }
            }
        } catch (Exception e) {
            log.createAndAppend('SubsidiaryTriggerHandlerBeforeInsert', 'mainEntry()', e);
        }
    }
}

public with sharing class UtilExceptions {

    //----------------------------

    // Exception logs accumulated during the transaction; inserted in one go at the end.
    public List<ExceptionLog__c> exceptionsToInsert = new List<ExceptionLog__c>();

    //----------------------------

    public void createAndAppend(String className, String methodName, Exception e) {
        exceptionsToInsert.add(ExceptionLogHelper.build(className, methodName, e, errorStackTrace(e)));
    }

    public void createAndAppend(String className, String methodName, Exception e, sObject thisObject) {
        exceptionsToInsert.add(ExceptionLogHelper.build(className, methodName, e, errorStackTrace(e, thisObject)));
    }

    public void createAndAppend(String className, String methodName, Exception e, sObject oldObject, sObject newObject) {
        exceptionsToInsert.add(ExceptionLogHelper.build(className, methodName, e, errorStackTrace(e, oldObject, newObject)));
    }

    //----------------------------

    public void logExceptions(String className, String methodName) {
        if (exceptionsToInsert.isEmpty())
            return;

        if (Limits.getDMLRows() >= Limits.getLimitDMLRows() || Limits.getDMLStatements() >= Limits.getLimitDMLStatements()) {
            System.debug('Failed to insert the Exception Log record. Error: The APEX RUNTIME GOVERNOR LIMITS have been exhausted.');
            return;
        }

        // A future method cannot be called from future or batch context, so log synchronously there.
        if (System.isFuture() || System.isBatch() || Test.isRunningTest()) {
            logExceptionsSync(className, methodName, exceptionsToInsert);
        } else {
            logExceptionsAsync(className, methodName, System.JSON.serialize(exceptionsToInsert));
        }
        exceptionsToInsert = new List<ExceptionLog__c>();
    }

    @future
    public static void logExceptionsAsync(String className, String methodName, String json) {
        logExceptionsSync(className, methodName, (List<ExceptionLog__c>) System.JSON.deserialize(json, List<ExceptionLog__c>.class));
    }

    public static void logExceptionsSync(String className, String methodName, List<ExceptionLog__c> logs) {
        try {
            UtilDML.insertMe(className, methodName, null, logs, true);
        } catch (Exception e) {
            System.debug('Failed to insert the Exception Log records. Error: ' + e.getMessage());
        }
    }

    //----------------------------

    public static String errorStackTrace(Exception e) {
        String trace = '[' + e.getLineNumber() + '] ' + e.getTypeName() + ': ' + e.getMessage() + '\n\n' + e.getStackTraceString();
        // Append up to three levels of causes, the same depth as the original nested expression.
        Exception cause = e.getCause();
        for (Integer depth = 0; depth < 3 && cause != null; depth++) {
            trace += '\n\nCaused by:\n\n' + cause.getTypeName() + ': ' + cause.getMessage() + '\n\n' + cause.getStackTraceString();
            cause = cause.getCause();
        }
        return trace;
    }

    public static String errorStackTrace(Exception e, sObject thisObject) {
        return errorStackTrace(e) + '\n\nObject: ' + thisObject;
    }

    public static String errorStackTrace(Exception e, sObject oldObject, sObject newObject) {
        return errorStackTrace(e) + '\n\nOld Object: ' + oldObject + '\n\nNew Object: ' + newObject;
    }

    //----------------------------

}

public with sharing class ExceptionLogHelper {

    //----------------------------

    public static ExceptionLog__c build(String className, String methodName, Exception e, String errorStackTrace) {
        return new ExceptionLog__c(
            ClassName__c = className,
            MethodName__c = methodName.replace('()', ''),
            Type__c = e.getTypeName(),
            Line__c = e.getLineNumber(),
            Message__c = e.getMessage(),
            Details__c = errorStackTrace
        );
    }

    public static ExceptionLog__c build(String className, String methodName, String errorType, Integer errorLine, String errorMessage, String errorStackTrace) {
        return new ExceptionLog__c(
            ClassName__c = className,
            MethodName__c = methodName.replace('()', ''),
            Type__c = errorType,
            Line__c = errorLine,
            Message__c = errorMessage,
            Details__c = errorStackTrace
        );
    }

    //----------------------------

}
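The UtilDML helper mentioned earlier is not posted in this thread. As a rough illustration of the idea behind it (attempt the insert with partial success allowed, then collect whatever failed), here is a minimal sketch. The class and method names are hypothetical, and the real helper presumably writes to the exception log rather than returning strings.

```apex
// Hypothetical sketch; not the UtilDML class referred to above.
public with sharing class PartialInsertSketch {

    // Returns one message per error; an empty list means every row was saved.
    public static List<String> insertAndCollectErrors(List<sObject> records) {
        List<String> failures = new List<String>();
        // allOrNone = false: valid rows are saved even when other rows fail.
        List<Database.SaveResult> results = Database.insert(records, false);
        for (Integer i = 0; i < results.size(); i++) {
            if (results[i].isSuccess()) {
                continue;
            }
            for (Database.Error err : results[i].getErrors()) {
                failures.add('Row ' + i + ' ' + String.valueOf(err.getStatusCode()) + ': ' + err.getMessage());
            }
        }
        return failures;
    }
}
```

Results come back in the same order as the input list, which is what lets the row index be matched to the record that failed.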

Hi Nathan, gorgeous code. I’m wondering if you have a public repo where I can get the whole code? Thanks again!!

Hey Esteve, I apologize for the delayed response; I only just got the email notification yesterday. I don’t have the full code posted anywhere, as this is a minor modification made on top of Hari’s great work, but I should absolutely work on posting it somewhere for use! I will keep you posted.

Thanks Hari for such a nice detailed article… this is an enlightening one for all our developer community.

[…] be a single class that handles all logic or there could be multiple classes involved like in Hari Krishnan’s excellent framework. In every case, the Trigger itself should never have business logic and should always call to […]

Hi Hari,

I think the bulkBefore() and bulkAfter() methods can be implemented in the ObjectTriggerDispatcher class to load any cache data. Why don’t these methods take TriggerParameters as a parameter like the other methods? I know that we can directly access Trigger.new and other context variables in these methods, but I am curious why they don’t take TriggerParameters as a parameter so that it would be uniform across all methods. Any specific reason?

Hi Mythili,

Sure, it can be modified to take TriggerParameters as a parameter – I didn’t do that for the simple reason that I didn’t have the need at that time. But you are most welcome to download the code and enhance it to suit your needs.

Best Regards,

Hari K.

Thanks Hari..

How to actually use bulkBefore and bulkAfter methods here??
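One plausible use, following the earlier remark that these methods can load cache data, is to run a single query for the whole batch before any per-record logic executes. The sketch below is only an illustration: the class name is hypothetical, and the call site shown in the trailing comment assumes that the dispatcher base class exposes an overridable bulkBefore(), which this thread suggests but does not show.

```apex
// Hypothetical cache class; none of these names come from the framework.
public class ContactParentCacheSketch {

    // Filled once per trigger invocation, then read by the per-record logic.
    public static Map<Id, Account> parentAccounts = new Map<Id, Account>();

    public static void load(List<Contact> contacts) {
        Set<Id> accountIds = new Set<Id>();
        if (contacts != null) {
            for (Contact c : contacts) {
                if (c.AccountId != null) {
                    accountIds.add(c.AccountId);
                }
            }
        }
        if (accountIds.isEmpty()) {
            return;
        }
        // One query for the whole batch instead of one query per record.
        parentAccounts.putAll([SELECT Id, Name FROM Account WHERE Id IN :accountIds]);
    }
}

// Assumed call site inside a Contact dispatcher:
//   public virtual override void bulkBefore() {
//       ContactParentCacheSketch.load((List<Contact>) Trigger.new);
//   }
```

A handler can then read ContactParentCacheSketch.parentAccounts.get(c.AccountId) inside its loop without issuing further queries.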

Thanks for the great article Hari! I’m just wondering if the class variables in TriggerDispatcherBase are necessary. I can’t see why we really need all those conditional statements in the TriggerDispatcherBase#execute() method, since handlerInstance implements ITriggerHandler. The code would be more readable.

protected void execute(ITriggerHandler handlerInstance, TriggerParameters tp, TriggerParameters.TriggerEvent tEvent) {
    if (handlerInstance != null) {
        handlerInstance.mainEntry(tp);
        handlerInstance.updateObjects();
    }
    else {
        // handlerInstance is null on this branch, so as written this call would throw a NullPointerException.
        handlerInstance.inProgressEntry(tp);
    }
}

Hi Tomas

The static variables and the condition checks were introduced to support ‘Separation of Concerns’. Also, since we have one handler for each event, we need to dispatch the events to the respective handlers, and hence we need a separate static variable to hold each of the handler instances. The condition checks make sure the appropriate event handler is invoked for the type of event that was fired. Hope this answers your question.

Best Regards,

Hari K.

Hello Hari,

I’ve been implementing your framework and I like what you accomplished. There was one thing that I couldn’t achieve, and that was 100% test coverage without having an artificial DML operation in the handler’s initial entry method to make the trigger re-entrant, just like you demonstrated in the code provided in the package.

What I came up with is overloading the constructor in the dispatcher class so that, when it is called from a test method, you can pass in Boolean parameters for the static variables (isBeforeInsertProcessing etc.), changing them from false to true.

private static Boolean isBeforeInsertProcessing = false;

// Default constructor
public AddressTriggerDispatcher() { }

// Overloaded constructor with ability to override the static variables for testing purposes
public AddressTriggerDispatcher(Boolean isBeforeInsertProcessingOverride) {
    isBeforeInsertProcessing = isBeforeInsertProcessingOverride;
}

So in my unit test I will be calling this constructor, while your original code will still be using the default constructor. Here is a part of my unit test:

TriggerParameters tp = new TriggerParameters(null, newList, null, null, true, false, true, false, false, false, true, newList.size());
ITriggerDispatcher beforeInsertDispatcher = new AddressTriggerDispatcher(true);
t.start();
beforeInsertDispatcher.beforeInsert(tp);
t.stop();

This forces the code into re-entrant mode, and the test can then assert whether the record has been inserted.

I wanted to share this idea for anyone who is trying to get 100% test coverage. If it’s not clear from my comment, I will be happy to provide more information.

Let me know your thoughts, or whether there’s something wrong with unit testing the framework this way.

Thank you,

Mihai

I have an object that, when ‘TriggerDispatcher’ is appended to its name, it is over the 40 char max name length. How can I get this to work (without renaming/recreating my object)?

Why would you want to append ‘TriggerDispatcher’ to your object’s name? ‘TriggerDispatcher’ is created as an Apex class, not as a custom object.

He’s not appending “TriggerDispatcher” to the object’s name in the object api name… Where he’s having the issue is that the name of a class has a finite limit (40 characters, if I remember correctly). So as part of Hari’s original setup, you name a class “AccountTriggerDispatcher” but that doesn’t work so well with “ThisIsAReallyLongTableName__cTriggerDispatcher” (46 characters… 6 over the limit). My script below allows you to keep the object api name what you want while allowing Hari’s script to correctly account for it in his framework.

I should also mention that my script also helps remove any trailing __c from custom objects so “ShortName__c” turns into “ShortNameTriggerDispatcher” and also subsequently removes any remaining double underscores to make installed packages more friendly. For instance, there’s an object SBQQ__QuoteLine__c as part of the SteelBrick CPQ installation. As part of my script, that trigger dispatcher is named “SBQQQuoteLineTriggerDispatcher”…

Hey Jojo,

I ran into the same issue and came up with a Custom Setting that solves this. I have a Custom Setting named “TableAbbreviations__c” with one custom field “Abbreviation__c”. For a long table name, you put “Name=ThisIsAReallyLongTableName__c” and “Abbreviation__c=LongTable”. Then, your “getTriggerDispatcher()” method should look like this:

private static TriggerDispatcher getTriggerDispatcher(Schema.sObjectType soType) {
    String originalTypeName = soType.getDescribe().getName();
    String dispatcherTypeName = null;

    // Look up an optional abbreviation for object names that are too long.
    TableAbbreviations__c abbr = TableAbbreviations__c.getInstance(originalTypeName);
    if (UtilGeneral.isDebug)
        System.debug('### getTriggerDispatcher().abbr ' + abbr);

    if (abbr != null) {
        dispatcherTypeName = abbr.Abbreviation__c + 'TriggerDispatcher';
    } else if (originalTypeName.toLowerCase().endsWith('__c')) {
        // Strip the trailing '__c' by length; indexOf('__c') could match earlier in the name.
        Integer index = originalTypeName.length() - 3;
        // Also drop any remaining double underscores (namespace prefixes).
        dispatcherTypeName = originalTypeName.substring(0, index).replace('__', '') + 'TriggerDispatcher';
    } else {
        dispatcherTypeName = originalTypeName + 'TriggerDispatcher';
    }

    Type obType = Type.forName(dispatcherTypeName);
    TriggerDispatcher dispatcher = (obType == null) ? null : (TriggerDispatcher) obType.newInstance();
    return dispatcher;
}

That first checks if there’s an abbreviation and uses it as the prefix before “TriggerDispatcher”. If not, it strips off “__c” by default for any others.

Hope that helps!

The Best Post i have ever seen related to SalesForce.

Hi Hari,

Thanks for the great article. I may not fully understand the design, but it seems to me that the flags in TriggerDispatcher are not necessary because we can use the handler variables in TriggerDispatcherBase to prevent re-entrancy. For example, I made afterUpdatehandler a public variable and updated the afterUpdate() method in AccountTriggerDispatcher as below:

//==============

public virtual override void afterUpdate(TriggerParameters tp) {
    /*
    if(!isAfterUpdateProcessing) {
        isAfterUpdateProcessing = true;
        execute(new AccountAfterUpdateTriggerHandler(), tp, TriggerParameters.TriggerEvent.afterUpdate);
        isAfterUpdateProcessing = false;
    }
    else execute(null, tp, TriggerParameters.TriggerEvent.afterUpdate);
    */
    if(afterUpdatehandler==null){
        afterUpdatehandler = new AccountAfterUpdateTriggerHandler();
        afterUpdatehandler.mainEntry(tp);
    }else afterUpdatehandler.inProgressEntry(tp);
}

//=============

With this change execute() method in TriggerDispatcherBase is not necessary anymore. Could you advise if there’s anything wrong with this?

Thank you.

Tu Dao

Hello Tu,

I didn’t get a chance to try this out, but I’m afraid this may not work reliably all the time. Also, the reason that I’m setting the isProcessing (for e.g. isAfterInsertProcessing) to false immediately after the execute method is to prevent the unit tests from failing. Try running your unit tests and see if it runs without any issues.

Hi Hari,

I really love your framework and am playing with it now. However, I have one concern regarding the way you use static variables especially in TriggerDispatcherBase. So please help to correct me if I’m wrong here.

In TriggerDispatcherBase, you have several static variables to keep references to handlers. Because they are static, I think there is a chance that the AccountAfterInsertTriggerHandler handler will handle the “inProgressEntry” of Contact and vice versa. For the same reason, there is a chance that these handlers can be re-assigned to an unexpected instance. For example, beforeInserthandler has just been assigned the reference to AccountAfterInsertTriggerHandler and is about to process Account, but then it is assigned to the ContactAfterInsertTriggerHandler handler because a new trigger for Contact just got fired.

Looking forward to hearing from you.

Thank you.

Ringo

Hello Ringo,

The scenario that you explained will never occur because of the way static variables are handled in Apex. In C# / Java (in shared server environments, e.g. a web/app server), a static variable that is initialized during class load gets reset only when the process/server is recycled or restarted. This is not the case in Apex: static variables are scoped to the execution context, which means that when user A initiates a request, he gets a fresh set of static variables, and when user B initiates a request (at the same time), he gets another fresh set. Please check this documentation and my response to a question on Stack Exchange. I hope this answers your question.

Hi,

First of all, thank you Hari for your work building such an excellent framework! We have been using it successfully in our org for six months now and have achieved a massive improvement in terms of general code quality.

Regarding Ringo’s concern, though, I believe it is valid. We have a quite complex environment with legacy triggers combined with workflow rules and Process Builder logic, which in turn can lead to situations where triggers are fired several times for each of the sObjects in the same transaction. As the static handler variables in TriggerDispatcherBase are assigned only on the first entry, we did end up in a situation where the framework tried to dispatch records of sObject A to the trigger event handler of sObject B, as TriggerDispatcherBase was still pointing to the handler of sObject B. This has been the most probable chain of events:

1. First entry to sObject A afterUpdate (-> TriggerDispatcherBase static variable afterUpdatehandler is set to point to sObjectAAfterUpdatehandler)

(… other events here …)

2. First entry to sObject B afterUpdate (-> TriggerDispatcherBase static variable afterUpdatehandler is set to point to sObjectBAfterUpdatehandler)

(… other events here …)

3. Second entry to sObject A afterUpdate (-> In the re-entrant code we use the existing afterUpdatehandler in TriggerDispatcherBase, which has been set to point to sObjectBAfterUpdatehandler -> results in an sObject type conversion error in the event handler class)

This might be a scenario that would never occur in a tidy org where ideally all code would have been implemented with this framework as even for us, with horrible situation with legacy code, it took several months of using the framework to notice this behaviour (the reason might have been that we hadn’t been implementing the inProgressEntry-methods for many of the sObjects, though). Anyhow, fixing this issue was quite straight forward as I just moved the static handler variables from TriggerDispatcherBase to the corresponding (sObject)TriggerDispatcher classes.

Regards,

Miikka R.
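The difference between one shared static slot and one slot per sObject can be reproduced in isolation. The sketch below is a simplified model, not the framework code: every name in it is hypothetical, and a map keyed by object name stands in for "a static variable in each object-specific dispatcher class".

```apex
// Simplified model of the issue described above; not the framework itself.
public class HandlerSlotSketch {

    public interface Handler { String handle(); }
    public class AccountHandler implements Handler {
        public String handle() { return 'Account'; }
    }
    public class ContactHandler implements Handler {
        public String handle() { return 'Contact'; }
    }

    // Shared slot: what a single static in a common base class amounts to.
    private static Handler sharedSlot;
    // Per-object slots: what moving the static into each dispatcher amounts to.
    private static Map<String, Handler> slotByObject = new Map<String, Handler>();

    public static String dispatchShared(String objectName, Boolean firstEntry) {
        if (firstEntry) {
            sharedSlot = newHandler(objectName);
        }
        // On re-entry this uses whichever handler was stored last, for any object.
        return sharedSlot.handle();
    }

    public static String dispatchPerObject(String objectName, Boolean firstEntry) {
        if (firstEntry) {
            slotByObject.put(objectName, newHandler(objectName));
        }
        return slotByObject.get(objectName).handle();
    }

    private static Handler newHandler(String objectName) {
        if (objectName == 'Account') {
            return new AccountHandler();
        }
        return new ContactHandler();
    }
}
```

Running the sequence "Account first entry, Contact first entry, Account re-entry" through dispatchShared ends up in the Contact handler, while dispatchPerObject stays with the Account handler, which mirrors the three numbered steps above.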

Hi Hari,

Many thanks for your framework: I was trying to implement Dan Appleman’s framework and was struggling with the tests. As you say some tests *may* fail if flags are not correctly defined…

I was wondering if it was possible for you to write a post regarding the ‘inProgressEntry’ method: a couple of examples that could be useful on either a standard re-entry and a cross-object re-entry… so far I have not been able to identify a situation where it would be useful (although I can see its potential).

Thanks again, and best regards,

Sergio

Hi Hari,

Excellent framework! I’m not 100% sure I’m right here, but your diagrams above may have a typo. In both diagrams, I see that the ITriggerDispatcher interface has bulkBefore() and bulkAfter() methods, but TriggerDispatcherBase and TriggerDispatcher have bulkBefore() and beforeAfter() instead of bulkBefore() and bulkAfter().

Thanks

[…] methods to nicely fit in with design patterns along the lines of those proposed by either Hari Krishnan in his blog or as described in Dan Appleman’s book titled Advanced Apex Programming Methods […]

Hi Hari,

How do I install this package in a sandbox? When I click on the AppExchange link, it always tries to install in the prod environment, even when I replace login.salesforce.com with test.salesforce.com. Any suggestions?

I was looking for a different trigger architecture when I found this, but it looked very promising so I’m giving it a try. I want to be sure I’m adding my helper classes at the right level, which is inside my AccountAfterUpdateTriggerHandler, as such:

public class AccountAfterUpdateTriggerHandler extends TriggerHandlerBase {
    public override void mainEntry(TriggerParameters tp) {
        Account_ContractTasks.checkClaimsStatus(tp.oldMap, tp.newMap); // call to a helper class
    }
}

In my static helper class, I’m processing the map, applying logic, and adding records. One of the features I was hoping to use was queuing changes to the Account via sObjectsToUpdate. However, the helper class doesn’t see sObjectsToUpdate. I don’t want to put my logic in the trigger handler, but I guess I need to pass the map (or objects) from my logic class back to my trigger handler? Just looking for some best practices on how to use sObjectsToUpdate, as well as how to segregate my logic from the trigger handler.

Thanks
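One way to keep the logic out of the handler while still using its update buffer is to have the helper return the pending changes and let the handler merge them. The sketch below is only one possible arrangement: the class name, the Rating comparison, and the Description value are placeholders, and the call site in the trailing comment assumes sObjectsToUpdate is a Map keyed by record Id that is visible inside the handler.

```apex
// Hypothetical helper; field choices are placeholders for real business logic.
public class AccountChangeHelperSketch {

    // Returns sparse Account records (Id plus changed fields) and performs no DML itself.
    public static Map<Id, Account> collectChanges(Map<Id, Account> oldMap, Map<Id, Account> newMap) {
        Map<Id, Account> changes = new Map<Id, Account>();
        for (Id accountId : newMap.keySet()) {
            if (oldMap.get(accountId).Rating != newMap.get(accountId).Rating) {
                changes.put(accountId, new Account(Id = accountId, Description = 'Rating changed'));
            }
        }
        return changes;
    }
}

// Assumed call site in the handler's mainEntry(tp):
//   Map<Id, Account> changes = AccountChangeHelperSketch.collectChanges(
//       (Map<Id, Account>) tp.oldMap, (Map<Id, Account>) tp.newMap);
//   sObjectsToUpdate.putAll(changes.values());
```

Because the helper has no dependency on the handler, it can also be unit tested with in-memory maps and no trigger context.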

This is great stuff Hari. We’ve already started implementing the framework and it works well. Excellent stuff

It seems like a great way to handle triggers, but during the implementation I realized that it only dispatches triggers at the object level. What if I need another layer of dispatching? E.g. I would like to create a new handler for each project or each record type, so that I can clearly segregate the business logic. How can the trigger factory get the correct handler for me?

Thanks!

Hello Wen,

While the idea of having more granularity at the record type level sounds interesting, I’m afraid it might be so granular that it becomes cumbersome to implement. For example, if you have 3 record types on the Account object, then you would need up to seven event handlers (before insert, before update, before delete, after insert, after update, after delete, undelete) for each record type, which is up to 21 event handlers, and that’s just for one object. The more objects and record types there are, the more complex it becomes to maintain. Just my 2 cents.

Hi Hari, quick question. Can we implement TriggerHandlerBase functions in the before insert context? For example, I want to delete some records in a beforeInsert handler class. Is that possible?

Yes, you can. You just need to extend TriggerHandlerBase in a new class and make sure the trigger includes the event. For example, your trigger should look like this:

trigger AccountTrigger on Account (before insert, …) {

…

}

Hello Mr. Krishnan,

How would you handle asynchronous updates?

I’m wondering because as a workaround for performing DML’s on system objects and non-system objects in the same context, which is not generally permitted (e.g. user and contact) you can asynchronously perform your DML’s in a separate method annotated with the @future annotation.

However, when trying to add a future method to the TriggerHandlerBase, I found that @future methods are required to be static, and static methods can’t be virtual.

Is it possible that the method in the TriggerHandlerBase will still work without being virtual, or will that ruin the entire idea of this framework? Additionally, being a static method, it’s in a different scope than the variables declared in its parent class so it’s unable to access the “sObjectsToFutureInsert” map I declared.

I’d prefer to keep all DML in a single class if possible, but does this need to be handled in the event handler class?

Thanks!
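
One common way to approach the @future question above is to keep the static @future method in a small helper class and have the instance-level handler pass it record Ids. The following is only a minimal sketch under assumptions: the class name FutureDmlHelper, the method deactivateUsers, and the field update are hypothetical illustrations and not part of the framework.

```apex
// Hypothetical helper class; not part of the framework described in the article.
public class FutureDmlHelper {

    // Called from an instance (virtual/overridden) handler method.
    // Skips the async call when already in a future or batch context,
    // where calling a @future method is not allowed.
    public static void deactivateUsersAsync(Set<Id> userIds) {
        if (userIds == null || userIds.isEmpty()) {
            return;
        }
        if (System.isFuture() || System.isBatch()) {
            return;
        }
        deactivateUsers(userIds);
    }

    // @future methods must be static, return void, and accept only
    // primitives or collections of primitives, so Ids are passed
    // instead of sObjects and the records are re-queried here.
    @future
    private static void deactivateUsers(Set<Id> userIds) {
        List<User> users = [SELECT Id, IsActive FROM User WHERE Id IN :userIds];
        for (User u : users) {
            u.IsActive = false; // illustrative change only
        }
        update users;
    }
}
```

With this shape the handler methods can stay virtual, because the static method sits outside the handler hierarchy and receives everything it needs as arguments rather than reading instance state such as a map declared on the handler.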

Additionally,

Is there an issue with AfterUndelete? It’s not included in the original trigger factory code, and when I added it (I also added it to the abstract classes as methods) the trigger factory fell into a recursive loop, strangely enough.

I have exactly the same issue. Something is definitely a problem in the framework. I’ve spent hours on this. The trigger factory messages the dispatcher over and over but the afterDelete method in the dispatcher never executes.

I got this to work in the end. I don’t remember how, but I did. If you post your framework, all triggers, and helper classes into a repo I can take a look at it to see what your issue may be.

Hey Hari,

Thanks for this. It’s highly useful.

I am not clear on the usage of the bulkBefore and bulkAfter methods. We have not implemented these methods anywhere. Is there any use for them, and if so, how can we use them?

Kindly help me understand.

[…] An architecture framework to handle triggers in the Force.com platform […]

On the flip side, this pattern introduces a single point of failure: if anything fails, other trigger methods may not run or may also fail. You also lose the ability to enable or disable separate trigger operations. You simply cannot disable one operation and keep the others enabled without redeploying. All trigger operations will be down until fixes are deployed. There is something to be appreciated in separation and loose coupling. Basic OO design principles may prevail in some scenarios.

Can you explain why you think that this will result in a single point of failure? This is a framework, and if it fails, the cause would not be the code in the framework itself (governor limits being an exception) but the user code. Regarding disabling the trigger, I did update the framework to include a disable feature, and it is very useful: you no longer need to add a bypass condition to each of your triggers, since it is built into the framework itself (it achieves this through a custom setting and code in the trigger handler). Unfortunately, I didn’t get time to publish this update on my blog. If you can point out where it can result in a single point of failure, I would gladly review it and respond.

Great Post Hari. I’m working to implement this framework and I see that you could control through Custom Setting, which we are also doing today. Where can I get the latest Code which includes Custom Settings references?

Hi Hari, can you please post the updated framework with the disable trigger logic and custom setting?

[…] Framework code I’m trying to understand. It’s a nice piece of work by Hari Krishnan (you can find it here) and is the framework that I’ve decided to use to build out the triggers needed in an org […]

Hello Hari Krishnan, have you updated your code? Kindly give me a link to the latest code.

Hi Hari, I have a basic question. Say a trigger in afterUpdate performs a DML statement on another related object, which in turn fires the trigger on the actual object. Now, since the isBeforeInsert boolean is false (in the dispatcher), it will create a new handler and execute. How is it prevented from re-entering the trigger? Apologies for my naive question, but I’m just trying to understand the ins and outs.

Hi Hari Krishna,

Great article. We are trying to implement this and have a question about how to use the bulkBefore(), bulkAfter() and finally() methods.

For example, in my AccountTriggerDispatcher, I want to override the bulkBefore() method to collect all the account Ids into a map based on some logic. I want to be able to use this map in the afterInsert and afterUpdate event handler classes. How would I be able to do that with this framework? If that is not the purpose of those methods, please describe how to use them.

Thanks

Lalitha

Thank you Hari! This was very insightful!

Hi Hari, I’m new to Apex, but given the way this is designed and explained, I think I’m going to implement this in the project I’m working on. Thank you!!!

[…] An architecture framework to handle triggers in the Force.com platform – By Hari Krishnan […]

[…] be missing Hari Krishnan’s proposal. This framework is not revolutionary; rather, as the author himself explains, it was the culmination of evolving the ideas of Tony Scott, Dan Appleman, and […]

Thanks, Hari. This is very good information. Could you also provide this as a video? It would be very useful to all.

Wonderful article Hari! This was fascinating to read and very helpful. Can’t wait to try this “at home” 😉

[…] Hari Krishnan’s Framework […]

Hi Hari

First of all, thanks for sharing this framework. I have started implementing this for my org. I implemented before insert and before update on Account, and now I sometimes get this platform bug:

AccountTrigger: execution of BeforeUpdate
caused by: System.UnexpectedException: common.exception.SfdcSqlException: IO Error: The Network Adapter could not establish the connection
Class.AccountTriggerDispatcher.bulkBefore: line 29, column 1
Class.TriggerFactory.execute: line 37, column 1
Class.TriggerFactory.createTriggerDispatcher: line 21, column 1
Trigger.AccountTrigger: line 3, column 1

Any ideas why this would happen?

Hi Hari,

Do you have this as a package, so that we can try it out instead of implementing it ourselves from scratch? The article is definitely helpful and well written.

Hi Hari,

That’s a really awesome framework.

Quick question: you are creating dispatcher objects using Type.newInstance for every call.

What harm do you see if I keep a Map of dispatcher names to trigger dispatcher objects in the TriggerFactory class?

Before calling Type.newInstance, I would first check whether this map contains a dispatcher object and return it if so; otherwise I would create a new one, put it in the map, and return that.

Will the state of this map be persisted across trigger invocations?
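
The caching idea described above could look roughly like the sketch below. It is only an illustration under assumptions: the class DispatcherCache and the method getOrCreate are hypothetical names, not part of the published framework. On the persistence question, static variables in Apex live for a single transaction only. The map would therefore be shared by trigger invocations within the same transaction (for example, the before and after phases, or re-entrant invocations), but it starts empty in every new transaction.

```apex
// Hypothetical cache; the class and method names are illustrative only.
public class DispatcherCache {

    // Static state is scoped to one transaction (execution context);
    // it is not persisted across separate requests.
    private static Map<String, Object> dispatchersByName =
        new Map<String, Object>();

    // Returns the cached instance for the given class name, creating it
    // on first use. Returns null when no class with that name exists.
    public static Object getOrCreate(String dispatcherTypeName) {
        if (!dispatchersByName.containsKey(dispatcherTypeName)) {
            Type dispatcherType = Type.forName(dispatcherTypeName);
            if (dispatcherType == null) {
                return null;
            }
            dispatchersByName.put(dispatcherTypeName, dispatcherType.newInstance());
        }
        return dispatchersByName.get(dispatcherTypeName);
    }
}
```

One thing to weigh before adopting this: a reused dispatcher instance also carries its instance-level state from one invocation to the next within the transaction, which may or may not be desirable depending on how the dispatcher tracks re-entrant calls.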